Table of Contents

- Semantic Caching for LLMs: TTLs, Confidence, and Cache Safety
- Why Semantic Caching for LLMs Requires Production Hardening
- Cache TTL in Semantic Caching: Preventing Stale LLM Responses
- MLOps Project Structure for Semantic Caching with FastAPI and Redis
- How…

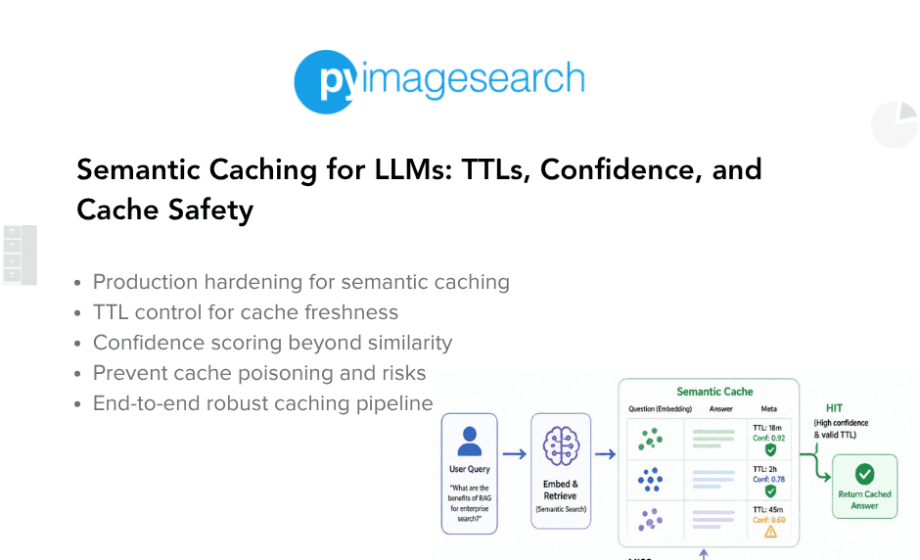

Semantic Caching for LLMs: TTLs, Confidence, and Cache Safety

Machine Learning
