Last updated on December 30, 2022 with content updates.

In today’s blog post you are going to learn how to perform face recognition in both images and video streams using:

- OpenCV

- Python

- Deep learning

As we’ll see, the deep learning-based facial embeddings we’ll be using here today are both (1) highly accurate and (2) capable of being executed in real-time.

To learn more about face recognition with OpenCV, Python, and deep learning, just keep reading!

Having a face dataset is crucial for building robust face recognition systems. It allows the model to learn diverse features of human faces such as facial structure, skin tone, and expressions, which leads to improved performance in recognizing different individuals.

Roboflow has free tools for each stage of the computer vision pipeline that will streamline your workflows and supercharge your productivity.

Sign up or Log in to your Roboflow account to access state of the art dataset libaries and revolutionize your computer vision pipeline.

You can start by choosing your own datasets or using our PyimageSearch’s assorted library of useful datasets.

Bring data in any of 40+ formats to Roboflow, train using any state-of-the-art model architectures, deploy across multiple platforms (API, NVIDIA, browser, iOS, etc), and connect to applications or 3rd party tools.

- Update July 2021: Added alternative face recognition methods section, including both deep learning-based and non-deep learning-based approaches.

Face recognition with OpenCV, Python, and deep learning

Inside this tutorial, you will learn how to perform facial recognition using OpenCV, Python, and deep learning.

We’ll start with a brief discussion of how deep learning-based facial recognition works, including the concept of “deep metric learning.”

From there, I will help you install the libraries you need to actually perform face recognition.

Finally, we’ll implement face recognition for both still images and video streams.

As we’ll discover, our face recognition implementation will be capable of running in real-time.

Understanding deep learning face recognition embeddings

So, how does deep learning + face recognition work?

The secret is a technique called deep metric learning.

If you have any prior experience with deep learning you know that we typically train a network to:

- Accept a single input image

- And output a classification/label for that image

However, deep metric learning is different.

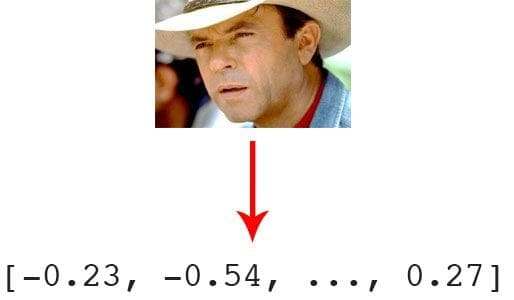

Instead of trying to output a single label (or even the coordinates/bounding box of objects in an image), we are instead outputting a real-valued feature vector.

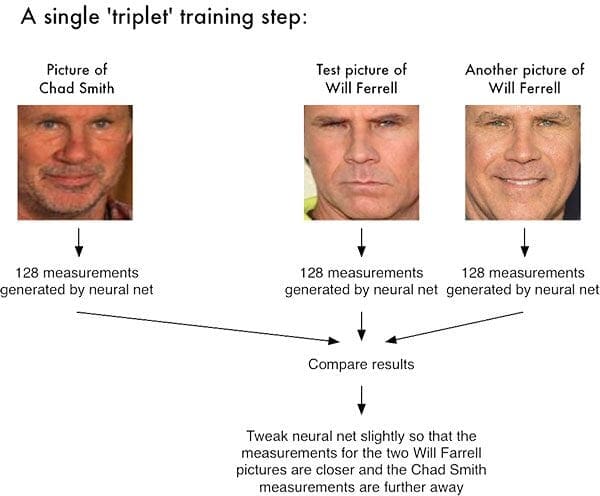

For the dlib facial recognition network, the output feature vector is 128-d (i.e., a list of 128 real-valued numbers) that is used to quantify the face. Training the network is done using triplets:

Here we provide three images to the network:

- Two of these images are example faces of the same person.

- The third image is a random face from our dataset and is not the same person as the other two images.

As an example, let’s again consider Figure 1 where we provided three images: one of Chad Smith and two of Will Ferrell.

Our network quantifies the faces, constructing the 128-d embedding (quantification) for each.

From there, the general idea is that we’ll tweak the weights of our neural network so that the 128-d measurements of the two Will Ferrells will be closer to each other and farther from the measurements for Chad Smith.

Our network architecture for face recognition is based on ResNet-34 from the Deep Residual Learning for Image Recognition paper by He et al., but with fewer layers and the number of filters reduced by half.

The network itself was trained by Davis King on a dataset of ≈3 million images. On the Labeled Faces in the Wild (LFW) dataset the network compares to other state-of-the-art methods, reaching 99.38% accuracy.

Both Davis King (the creator of dlib) and Adam Geitgey (the author of the face_recognition module we’ll be using shortly) have written detailed articles on how deep learning-based facial recognition works:

- High Quality Face Recognition with Deep Metric Learning (Davis)

- Modern Face Recognition with Deep Learning (Adam)

I would highly encourage you to read the above articles for more details on how deep learning facial embeddings work.

Install your face recognition libraries

In order to perform face recognition with Python and OpenCV we need to install two additional libraries:

The dlib library, maintained by Davis King, contains our implementation of “deep metric learning” which is used to construct our face embeddings used for the actual recognition process.

The face_recognition library, created by Adam Geitgey, wraps around dlib’s facial recognition functionality, making it easier to work with.

I assume that you have OpenCV installed on your system. If not, no worries — just visit my OpenCV install tutorials page and follow the guide appropriate for your system.

From there, let’s install dlib and the face_recognition packages.

Note: For the following installs, ensure you are in a Python virtual environment if you’re using one. I highly recommend virtual environments for isolating your projects — it is a Python best practice. If you’ve followed my OpenCV install guides (and installed virtualenv + virtualenvwrapper ) then you can use the workon command prior to installing dlib and face_recognition.

Installing dlib without GPU support

If you do not have a GPU you can install dlib using pip by following this guide:

$ workon # optional $ pip install dlib

Or you can compile from source:

$ workon <your env name here> # optional $ git clone https://github.com/davisking/dlib.git $ cd dlib $ mkdir build $ cd build $ cmake .. -DUSE_AVX_INSTRUCTIONS=1 $ cmake --build . $ cd .. $ python setup.py install --yes USE_AVX_INSTRUCTIONS

Installing dlib with GPU support (optional)

If you do have a CUDA compatible GPU you can install dlib with GPU support, making facial recognition faster and more efficient.

For this, I recommend installing dlib from source as you’ll have more control over the build:

$ workon <your env name here> # optional $ git clone https://github.com/davisking/dlib.git $ cd dlib $ mkdir build $ cd build $ cmake .. -DDLIB_USE_CUDA=1 -DUSE_AVX_INSTRUCTIONS=1 $ cmake --build . $ cd .. $ python setup.py install --yes USE_AVX_INSTRUCTIONS --yes DLIB_USE_CUDA

Install the face_recognition package

The face_recognition module is installable via a simple pip command:

$ workon <your env name here> # optional $ pip install face_recognition

Install imutils

You’ll also need my package of convenience functions, imutils. You may install it in your Python virtual environment via pip:

$ workon <your env name here> # optional $ pip install imutils

Our face recognition dataset

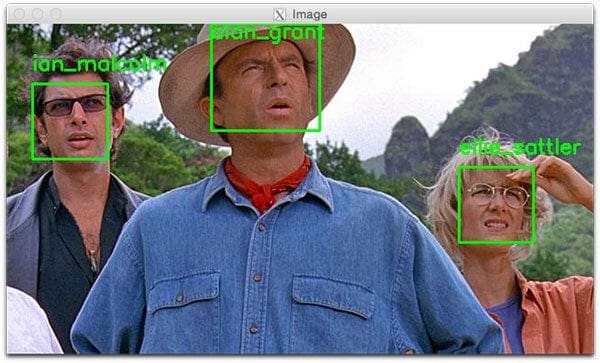

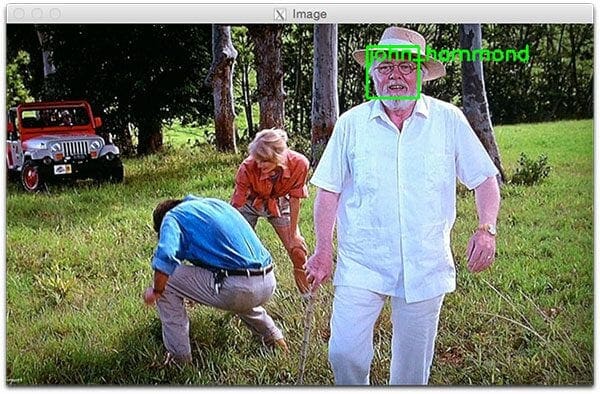

Since Jurassic Park (1993) is my favorite movie of all time, and in honor of Jurassic World: Fallen Kingdom (2018) being released this Friday in the U.S., we are going to apply face recognition to a sample of the characters in the films:

- Alan Grant, paleontologist (22 images)

- Claire Dearing, park operations manager (53 images)

- Ellie Sattler, paleobotanist (31 images)

- Ian Malcolm, mathematician (41 images)

- John Hammond, businessman/Jurassic Park owner (36 images)

- Owen Grady, dinosaur researcher (35 images)

This dataset was constructed in < 30 minutes using the method discussed in my How to (quickly) build a deep learning image dataset tutorial. Given this dataset of images we’ll:

- Create the 128-d embeddings for each face in the dataset

- Use these embeddings to recognize the faces of the characters in both images and video streams

Face recognition project structure

Our project structure can be seen by examining the output from the tree command:

$ tree --filelimit 10 --dirsfirst . ├── dataset │ ├── alan_grant [22 entries] │ ├── claire_dearing [53 entries] │ ├── ellie_sattler [31 entries] │ ├── ian_malcolm [41 entries] │ ├── john_hammond [36 entries] │ └── owen_grady [35 entries] ├── examples │ ├── example_01.png │ ├── example_02.png │ └── example_03.png ├── output │ └── lunch_scene_output.avi ├── videos │ └── lunch_scene.mp4 ├── search_bing_api.py ├── encode_faces.py ├── recognize_faces_image.py ├── recognize_faces_video.py ├── recognize_faces_video_file.py └── encodings.pickle 10 directories, 11 files

Our project has 4 top-level directories:

dataset/: Contains face images for six characters organized into subdirectories based on their respective names.examples/: Has three face images for testing that are not in the dataset.output/: This is where you can store your processed face recognition videos. I’m leaving one of mine in the folder — the classic “lunch scene” from the original Jurassic Park movie.videos/: Input videos should be stored in this folder. This folder also contains the “lunch scene” video but it hasn’t undergone our face recognition system yet.

We also have 6 files in the root directory:

search_bing_api.py: Step 1 is to build a dataset (I’ve already done this for you). To learn how to use the Bing API to build a dataset with my script, just see this blog post.encode_faces.py: Encodings (128-d vectors) for faces are built with this script.recognize_faces_image.py: Recognize faces in a single image (based on encodings from your dataset).recognize_faces_video.py: Recognize faces in a live video stream from your webcam and output a video.recognize_faces_video_file.py: Recognize faces in a video file residing on disk and output the processed video to disk. I won’t be discussing this file today as the bones are from the same skeleton as the video stream file.encodings.pickle: Facial recognition encodings are generated from your dataset viaencode_faces.pyand then serialized to disk.

After a dataset of images is created (with search_bing_api.py), we’ll run encode_faces.py to build the embeddings.

From there, we’ll run the recognize scripts to actually recognize the faces.

Encoding the faces using OpenCV and deep learning

face_recognition module method generates a 128-d real-valued number feature vector per face.Before we can recognize faces in images and videos, we first need to quantify the faces in our training set. Keep in mind that we are not actually training a network here — the network has already been trained to create 128-d embeddings on a dataset of ~3 million images.

We certainly could train a network from scratch or even fine-tune the weights of an existing model but that is more than likely overkill for many projects. Furthermore, you would need a lot of images to train the network from scratch.

Instead, it’s easier to use the pre-trained network and then use it to construct 128-d embeddings for each of the 218 faces in our dataset.

Then, during classification, we can use a simple k-NN model + votes to make the final face classification. Other traditional machine learning models can be used here as well.

To construct our face embeddings open up encode_faces.py from the “Downloads” associated with this blog post:

# import the necessary packages from imutils import paths import face_recognition import argparse import pickle import cv2 import os

First, we need to import required packages. Again, take note that this script requires imutils, face_recognition, and OpenCV installed. Scroll up to the “Install your face recognition libraries” to make sure you have the libraries ready to go on your system.

Let’s handle our command line arguments that are processed at runtime with argparse :

# construct the argument parser and parse the arguments

ap = argparse.ArgumentParser()

ap.add_argument("-i", "--dataset", required=True,

help="path to input directory of faces + images")

ap.add_argument("-e", "--encodings", required=True,

help="path to serialized db of facial encodings")

ap.add_argument("-d", "--detection-method", type=str, default="cnn",

help="face detection model to use: either `hog` or `cnn`")

args = vars(ap.parse_args())

If you’re new to PyImageSearch, let me direct your attention to the above code block which will become familiar to you as you read more of my blog posts. We’re using argparse to parse command line arguments. When you run a Python program in your command line, you can provide additional information to the script without leaving your terminal. Lines 10-17 do not need to be modified as they parse input coming from the terminal. Check out my blog post about command line arguments if these lines look unfamiliar.

Let’s list out the argument flags and discuss them:

--dataset: The path to our dataset (we created a dataset withsearch_bing_api.pydescribed in method #2 of last week’s blog post).--encodings: Our face encodings are written to the file that this argument points to.--detection-method: Before we can encode faces in images we first need to detect them. Or two face detection methods include eitherhogorcnn. Those two flags are the only ones that will work for--detection-method.

Now that we’ve defined our arguments, let’s grab the paths to the files in our dataset (as well as perform two initializations):

# grab the paths to the input images in our dataset

print("[INFO] quantifying faces...")

imagePaths = list(paths.list_images(args["dataset"]))

# initialize the list of known encodings and known names

knownEncodings = []

knownNames = []

Line 21 uses the path to our input dataset directory to build a list of all imagePaths contained therein.

We also need to initialize two lists before our loop, knownEncodings and knownNames , respectively. These two lists will contain the face encodings and corresponding names for each person in the dataset (Lines 24 and 25).

It’s time to begin looping over our Jurassic Park character faces!

# loop over the image paths

for (i, imagePath) in enumerate(imagePaths):

# extract the person name from the image path

print("[INFO] processing image {}/{}".format(i + 1,

len(imagePaths)))

name = imagePath.split(os.path.sep)[-2]

# load the input image and convert it from BGR (OpenCV ordering)

# to dlib ordering (RGB)

image = cv2.imread(imagePath)

rgb = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)

This loop will cycle 218 times corresponding to our 218 face images in the dataset. We’re looping over the paths to each of the images on Line 28.

From there, we’ll extract the name of the person from the imagePath (as our subdirectory is named appropriately) on Line 32.

Then let’s load the image while passing the imagePath to cv2.imread (Line 36).

OpenCV orders color channels in BGR, but the dlib actually expects RGB. The face_recognition module uses dlib, so before we proceed, let’s swap color spaces on Line 37, naming the new image rgb.

Next, let’s localize the face and compute encodings:

# detect the (x, y)-coordinates of the bounding boxes # corresponding to each face in the input image boxes = face_recognition.face_locations(rgb, model=args["detection_method"]) # compute the facial embedding for the face encodings = face_recognition.face_encodings(rgb, boxes) # loop over the encodings for encoding in encodings: # add each encoding + name to our set of known names and # encodings knownEncodings.append(encoding) knownNames.append(name)

This is the fun part of the script!

For each iteration of the loop, we’re going to detect a face (or possibly multiple faces and assume that it is the same person in multiple locations of the image — this assumption may or may not hold true in your own images so be careful here).

For example, let’s say that rgb contains a picture (or pictures) of Ellie Sattler’s face.

Lines 41 and 42 actually find/localize the faces of her resulting in a list of face boxes. We pass two parameters to the face_recognition.face_locations method:

rgb: Our RGB image.model: Eithercnnorhog(this value is contained within our command line arguments dictionary associated with the"detection_method"key). The CNN method is more accurate but slower. HOG is faster but less accurate.

Then, we’re going to turn the bounding boxes of Ellie Sattler’s face into a list of 128 numbers on Line 45. This is known as encoding the face into a vector and the face_recognition.face_encodings method handles it for us.

From there we just need to append the Ellie Sattler encoding and name to the appropriate list (knownEncodings and knownNames).

We’ll continue to do this for all 218 images in the dataset.

What would be the point of encoding the images unless we could use the encodings in another script which handles the recognition?

Let’s take care of that now:

# dump the facial encodings + names to disk

print("[INFO] serializing encodings...")

data = {"encodings": knownEncodings, "names": knownNames}

f = open(args["encodings"], "wb")

f.write(pickle.dumps(data))

f.close()

Line 56 constructs a dictionary with two keys — "encodings" and "names".

From there Lines 57-59 dump the names and encodings to disk for future recall.

How should I run the encode_faces.py script in the terminal?

To create our facial embeddings open up a terminal and execute the following command:

$ python encode_faces.py --dataset dataset --encodings encodings.pickle [INFO] quantifying faces... [INFO] processing image 1/218 [INFO] processing image 2/218 [INFO] processing image 3/218 ... [INFO] processing image 216/218 [INFO] processing image 217/218 [INFO] processing image 218/218 [INFO] serializing encodings... $ ls -lh encodings* -rw-r--r--@ 1 adrian staff 234K May 29 13:03 encodings.pickle

As you can see from our output, we now have a file named encodings.pickle — this file contains the 128-d face embeddings for each face in our dataset.

On my Titan X GPU, processing the entire dataset took a little over a minute, but if you’re using a CPU, be prepared to wait awhile for this script to complete!

On my Macbook Pro (no GPU), encoding 218 images required 21min 20sec.

You should expect much faster speeds if you have a GPU and compiled dlib with GPU support.

Recognizing faces in images

face_recognition Python module.Now that we have created our 128-d face embeddings for each image in our dataset, we are now ready to recognize faces in image using OpenCV, Python, and deep learning.

Open up recognize_faces_image.py and insert the following code (or better yet, grab the files and image data associated with this blog post from the “Downloads” section found at the bottom of this post, and follow along):

# import the necessary packages

import face_recognition

import argparse

import pickle

import cv2

# construct the argument parser and parse the arguments

ap = argparse.ArgumentParser()

ap.add_argument("-e", "--encodings", required=True,

help="path to serialized db of facial encodings")

ap.add_argument("-i", "--image", required=True,

help="path to input image")

ap.add_argument("-d", "--detection-method", type=str, default="cnn",

help="face detection model to use: either `hog` or `cnn`")

args = vars(ap.parse_args())

This script requires just four imports on Lines 2-5. The face_recognition module will do the heavy lifting and OpenCV will help us to load, convert, and display the processed image.

We’ll parse three command line arguments on Lines 8-15:

--encodings: The path to the pickle file containing our face encodings.--image: This is the image that is undergoing facial recognition.--detection-method: You should be familiar with this one by now — we’re either going to use ahogorcnnmethod depending on the capability of your system. For speed, choosehogand for accuracy, choosecnn.

IMPORTANT! If you are:

- Running the face recognition code on a CPU

- OR you using a Raspberry Pi

- …you’ll want to set the

--detection-methodtohogas the CNN face detector is (1) slow without a GPU and (2) the Raspberry Pi won’t have enough memory to run the CNN either.

From there, let’s load the pre-computed encodings + face names and then construct the 128-d face encoding for the input image:

# load the known faces and embeddings

print("[INFO] loading encodings...")

data = pickle.loads(open(args["encodings"], "rb").read())

# load the input image and convert it from BGR to RGB

image = cv2.imread(args["image"])

rgb = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)

# detect the (x, y)-coordinates of the bounding boxes corresponding

# to each face in the input image, then compute the facial embeddings

# for each face

print("[INFO] recognizing faces...")

boxes = face_recognition.face_locations(rgb,

model=args["detection_method"])

encodings = face_recognition.face_encodings(rgb, boxes)

# initialize the list of names for each face detected

names = []

Line 19 loads our pickled encodings and face names from disk. We’ll need this data later during the actual face recognition step.

Then, on Lines 22 and 23 we load and convert the input image to rgb color channel ordering (just as we did in the encode_faces.py script).

We then proceed to detect all faces in the input image and compute their 128-d encodings on Lines 29-31 (these lines should also look familiar).

Now is a good time to initialize a list of names for each face that is detected — this list will be populated in the next step.

Next, let’s loop over the facial encodings:

# loop over the facial embeddings for encoding in encodings: # attempt to match each face in the input image to our known # encodings matches = face_recognition.compare_faces(data["encodings"], encoding) name = "Unknown"

On Line 37, we begin to loop over the face encodings computed from our input image.

Then the facial recognition magic happens!

We attempt to match each face in the input image (encoding) to our known encodings dataset (held in data["encodings"]) using face_recognition.compare_faces (Lines 40 and 41).

This function returns a list of True /False values, one for each image in our dataset. For our Jurassic Park example, there are 218 images in the dataset and therefore the returned list will have 218 boolean values.

Internally, the compare_faces function is computing the Euclidean distance between the candidate embedding and all faces in our dataset:

- If the distance is below some tolerance (the smaller the tolerance, the more strict our facial recognition system will be) then we return

True, indicating the faces match. - Otherwise, if the distance is above the tolerance threshold we return

Falseas the faces do not match.

Essentially, we are utilizing a “more fancy” k-NN model for classification. Be sure to refer to the compare_faces implementation for more details.

The name variable will eventually hold the name string of the person — for now, we leave it as "Unknown" in case there are no “votes” (Line 42).

Given our matches list we can compute the number of “votes” for each name (number of True values associated with each name), tally up the votes, and select the person’s name with the most corresponding votes:

# check to see if we have found a match

if True in matches:

# find the indexes of all matched faces then initialize a

# dictionary to count the total number of times each face

# was matched

matchedIdxs = [i for (i, b) in enumerate(matches) if b]

counts = {}

# loop over the matched indexes and maintain a count for

# each recognized face face

for i in matchedIdxs:

name = data["names"][i]

counts[name] = counts.get(name, 0) + 1

# determine the recognized face with the largest number of

# votes (note: in the event of an unlikely tie Python will

# select first entry in the dictionary)

name = max(counts, key=counts.get)

# update the list of names

names.append(name)

If there are any True votes in matches (Line 45) we need to determine the indexes of where these True values are in matches. We do just that on Line 49 where we construct a simple list of matchedIdxs which might look like this for example_01.png:

(Pdb) matchedIdxs [35, 36, 37, 38, 39, 40, 41, 42, 43, 44, 45, 46, 47, 48, 49, 50, 51, 52, 53, 54, 55, 56, 57, 58, 59, 60, 61, 62, 63, 64, 65, 66, 67, 68, 69, 71, 72, 73, 74, 75]

We then initialize a dictionary called counts which will hold the character name as the key and the number of votes as the value (Line 50).

From there, let’s loop over the matchedIdxs and set the value associated with each name while incrementing it as necessary in counts . The counts dictionary might look like this for a high vote score for Ian Malcolm:

(Pdb) counts

{'ian_malcolm': 40}

Recall that we only have 41 pictures of Ian in the dataset, so a score of 40 with no votes for anybody else is extremely high.

Line 61 extracts the name with the most votes from counts , in this case, it would be 'ian_malcolm'.

The second iteration of our loop (as there are two faces in our example image) of the main facial encodings loop yields the following for counts:

(Pdb) counts

{'alan_grant': 5}

That is definitely a smaller vote score, but still, there is only one name in the dictionary so we likely have found Alan Grant.

Note: The PDB Python Debugger was used to verify values of the counts dictionary. PDB usage is outside the scope of this blog post; however, you can discover how to use it on the Python docs page.

As shown in Figure 5 below, both Ian Malcolm and Alan Grant have been correctly recognized, so this part of the script is working well.

Let’s move on and loop over the bounding boxes and labeled names for each person and draw them on our output image for visualization purposes:

# loop over the recognized faces

for ((top, right, bottom, left), name) in zip(boxes, names):

# draw the predicted face name on the image

cv2.rectangle(image, (left, top), (right, bottom), (0, 255, 0), 2)

y = top - 15 if top - 15 > 15 else top + 15

cv2.putText(image, name, (left, y), cv2.FONT_HERSHEY_SIMPLEX,

0.75, (0, 255, 0), 2)

# show the output image

cv2.imshow("Image", image)

cv2.waitKey(0)

On Line 67, we begin looping over the detected face bounding boxes and predicted names. To create an iterable object so we can easily loop through the values, we call zip(boxes, names) resulting in tuples that we can extract the box coordinates and name from.

We use the box coordinates to draw a green rectangle on Line 69.

We also use the coordinates to calculate where we should draw the text for the person’s name (Line 70) followed by actually placing the name text on the image (Lines 71 and 72). If the face bounding box is at the very top of the image, we need to move the text below the top of the box (handled on Line 70), otherwise, the text would be cut off.

We then proceed to display the image until a key is pressed (Lines 75 and 76).

How should you run the facial recognition Python script?

Using your terminal, first ensure you’re in your respective Python correct virtual environment with the workon command (if you are using a virtual environment, of course).

Then run the script while providing the two command line arguments at a minimum. If you choose to use the HoG method, be sure to pass --detection-method hog as well (otherwise it will default to the deep learning detector).

Let’s go for it!

To recognize a face using OpenCV and Python open up your terminal and execute our script:

$ python recognize_faces_image.py --encodings encodings.pickle \ --image examples/example_01.png [INFO] loading encodings... [INFO] recognizing faces...

A second face recognition example follows:

$ python recognize_faces_image.py --encodings encodings.pickle \ --image examples/example_03.png [INFO] loading encodings... [INFO] recognizing faces...

Recognizing faces in video

Now that we have applied face recognition to images let’s also apply face recognition to videos (in real-time) as well.

Important Performance Note: The CNN face recognizer should only be used in real-time if you are working with a GPU (you can use it with a CPU, but expect less than 0.5 FPS which makes for a choppy video). Alternatively (you are using a CPU), you should use the HoG method (or even OpenCV Haar cascades covered in a future blog post) and expect adequate speeds.

The following script draws many parallels from the previous recognize_faces_image.py script. Therefore I’ll be breezing past what we’ve already covered and just review the video components so that you understand what is going on.

Once you’ve grabbed the “Downloads”, open up recognize_faces_video.py and follow along:

# import the necessary packages

from imutils.video import VideoStream

import face_recognition

import argparse

import imutils

import pickle

import time

import cv2

# construct the argument parser and parse the arguments

ap = argparse.ArgumentParser()

ap.add_argument("-e", "--encodings", required=True,

help="path to serialized db of facial encodings")

ap.add_argument("-o", "--output", type=str,

help="path to output video")

ap.add_argument("-y", "--display", type=int, default=1,

help="whether or not to display output frame to screen")

ap.add_argument("-d", "--detection-method", type=str, default="cnn",

help="face detection model to use: either `hog` or `cnn`")

args = vars(ap.parse_args())

We import packages on Lines 2-8 and then proceed to parse our command line arguments on Lines 11-20.

We have four command line arguments, two of which you should recognize from above (--encodings and --detection-method). The other two arguments are:

--output: The path to the output video.--display: A flag which instructs the script to display the frame to the screen. A value of1displays and a value of0will not display the output frames to our screen.

From there we’ll load our encodings and start our VideoStream:

# load the known faces and embeddings

print("[INFO] loading encodings...")

data = pickle.loads(open(args["encodings"], "rb").read())

# initialize the video stream and pointer to output video file, then

# allow the camera sensor to warm up

print("[INFO] starting video stream...")

vs = VideoStream(src=0).start()

writer = None

time.sleep(2.0)

To access our camera we’re using the VideoStream class from imutils. Line 29 starts the stream. If you have multiple cameras on your system (such as a built-in webcam and an external USB cam), you can change the src=0 to src=1 and so forth.

We’ll be optionally writing processed video frames to disk later, so we initialize writer to None (Line 30). Sleeping for 2 complete seconds allows our camera to warm up (Line 31).

From there we’ll start a while loop and begin to grab and process frames:

# loop over frames from the video file stream while True: # grab the frame from the threaded video stream frame = vs.read() # convert the input frame from BGR to RGB then resize it to have # a width of 750px (to speedup processing) rgb = cv2.cvtColor(frame, cv2.COLOR_BGR2RGB) rgb = imutils.resize(frame, width=750) r = frame.shape[1] / float(rgb.shape[1]) # detect the (x, y)-coordinates of the bounding boxes # corresponding to each face in the input frame, then compute # the facial embeddings for each face boxes = face_recognition.face_locations(rgb, model=args["detection_method"]) encodings = face_recognition.face_encodings(rgb, boxes) names = []

Our loop begins on Line 34 and the first step we take is to grab a frame from the video stream (Line 36).

The remaining Lines 40-50 in the above code block are nearly identical to the lines in the previous script with the exception being that this is a video frame and not a static image. Essentially we read the frame, preprocess, and then detect face bounding boxes + calculate encodings for each bounding box.

Next, let’s loop over the facial encodings associated with the faces we have just found:

# loop over the facial embeddings

for encoding in encodings:

# attempt to match each face in the input image to our known

# encodings

matches = face_recognition.compare_faces(data["encodings"],

encoding)

name = "Unknown"

# check to see if we have found a match

if True in matches:

# find the indexes of all matched faces then initialize a

# dictionary to count the total number of times each face

# was matched

matchedIdxs = [i for (i, b) in enumerate(matches) if b]

counts = {}

# loop over the matched indexes and maintain a count for

# each recognized face face

for i in matchedIdxs:

name = data["names"][i]

counts[name] = counts.get(name, 0) + 1

# determine the recognized face with the largest number

# of votes (note: in the event of an unlikely tie Python

# will select first entry in the dictionary)

name = max(counts, key=counts.get)

# update the list of names

names.append(name)

In this code block, we loop over each of the encodings and attempt to match the face. If there are matches found, we count the votes for each name in the dataset. We then extract the highest vote count and that is the name associated with the face. These lines are identical to the previous script we reviewed, so let’s move on.

In this next block, we loop over the recognized faces and proceed to draw a box around the face and the display name of the person above the face:

# loop over the recognized faces for ((top, right, bottom, left), name) in zip(boxes, names): # rescale the face coordinates top = int(top * r) right = int(right * r) bottom = int(bottom * r) left = int(left * r) # draw the predicted face name on the image cv2.rectangle(frame, (left, top), (right, bottom), (0, 255, 0), 2) y = top - 15 if top - 15 > 15 else top + 15 cv2.putText(frame, name, (left, y), cv2.FONT_HERSHEY_SIMPLEX, 0.75, (0, 255, 0), 2)

Those lines are identical too, so let’s focus on the video-related code.

Optionally, we’re going to write the frame to disk, so let’s see how writing video to disk with OpenCV works:

# if the video writer is None *AND* we are supposed to write # the output video to disk initialize the writer if writer is None and args["output"] is not None: fourcc = cv2.VideoWriter_fourcc(*"MJPG") writer = cv2.VideoWriter(args["output"], fourcc, 20, (frame.shape[1], frame.shape[0]), True) # if the writer is not None, write the frame with recognized # faces to disk if writer is not None: writer.write(frame)

Assuming we have an output file path provided in the command line arguments and we haven’t already initialized a video writer (Line 99), let’s go ahead and initialize it.

On Line 100, we initialize the VideoWriter_fourcc. FourCC is a 4-character code and in our case, we’re going to use the “MJPG” 4-character code.

From there, we’ll pass that object into the VideoWriter along with our output file path, frames per second target, and frame dimensions (Lines 101 and 102).

Finally, if the writer exists, we can go ahead and write a frame to disk (Lines 106 and 107).

Let’s handle whether or not we should display the face recognition video frames on the screen:

# check to see if we are supposed to display the output frame to

# the screen

if args["display"] > 0:

cv2.imshow("Frame", frame)

key = cv2.waitKey(1) & 0xFF

# if the `q` key was pressed, break from the loop

if key == ord("q"):

break

If our display command line argument is set, we go ahead and display the frame (Line 112) and check if the quit key ("q") has been pressed (Lines 113-116), at which point we’d break out of the loop (Line 117).

Lastly, let’s perform our housekeeping duties:

# do a bit of cleanup cv2.destroyAllWindows() vs.stop() # check to see if the video writer point needs to be released if writer is not None: writer.release()

In Lines 120-125, we clean up and release the display, video stream, and video writer.

Are you ready to see the script in action?

To demonstrate real-time face recognition with OpenCV and Python in action, open up a terminal and execute the following command:

$ python recognize_faces_video.py --encodings encodings.pickle \ --output output/webcam_face_recognition_output.avi --display 1 [INFO] loading encodings... [INFO] starting video stream...

Below you can find an output example video that I recorded demonstrating the face recognition system in action:

Face recognition in video files

As I mentioned in our “Face recognition project structure” section, there’s an additional script included in the “Downloads” for this blog post — recognize_faces_video_file.py.

This file is essentially the same as the one we just reviewed for the webcam except it will take an input video file and generate an output video file if you’d like.

I applied our face recognition code to the popular “lunch scene” from the original Jurassic Park movie where the cast is sitting around a table sharing their concerns with the park:

$ python recognize_faces_video_file.py --encodings encodings.pickle \ --input videos/lunch_scene.mp4 --output output/lunch_scene_output.avi \ --display 0

Here’s the result:

Note: Recall that our model was trained on four members of the original cast: Alan Grant, Ellie Sattler, Ian Malcolm, and John Hammond. The model was not trained on Donald Gennaro (the lawyer) which is why his face is labeled as “Unknown”. This behavior was by design (not an accident) to show that our face recognition system can recognize faces it was trained on while leaving faces it cannot recognize as “Unknown”.

And in the following video I have put together a “highlight reel” of Jurassic Park and Jurassic World clips, mainly from the trailers:

As we can see, our face recognition and OpenCV code works quite well!

Can I use this face recognizer code on the Raspberry Pi?

Kinda, sorta. There are a few limitations though:

- The Raspberry Pi does not have enough memory to utilize the more accurate CNN-based face detector…

- …so we are limited to HOG instead

- Except that HOG is far too slow on the Pi for real-time face detection…

- …so we need to utilize OpenCV’s Haar cascades

And once you get it running you can expect only 1-2 FPS, and even reaching that level of FPS takes a few tricks.

The good news is that I’ll be back next week to discuss how to run our face recognizer on the Raspberry Pi, so stay tuned!

Alternative face recognition methods

The face recognition method we used inside this tutorial was based on a combination of Davis King’s dlib library and Adam Geitgey’s face_recognition module.

Davis has provided a ResNet-based siamese network that is super useful for face recognition tasks. Adam’s library provides a wrapper around dlib to make the face recognition functionality easier to use.

However, there are other face recognition methods that can be used, including both deep learning-based and traditional computer vision-based approaches.

To start, take a look at this tutorial on OpenCV Face Recognition which is a pure OpenCV-based face recognizer (i.e., no other libraries, such as dlib, scikit-image, etc., are required to perform face recognition). That said, dlib’s face recognizer does tend to be a bit more accurate, so keep that in mind when implementing face recognition models of your own.

For non-deep learning-based face recognition, I suggest taking a look at both Eigenfaces and Local Binary Patterns (LBPs) for face recognition:

These methods are less accurate than their deep learning-based face recognition counterparts, but tend to be much more computationally efficient and will run faster on embedded systems.

Face Recognition Application ideas

What can’t you build with face recognition? Seriously, it’s so useful to be able to recognize a person’s face.

Here are just a few ideas of what you can build

- Security

- Account logon

- Marketing applications

- Sales workflow

- Social media engagement meter

- Healthcare patient ID

- Voting ID

- Education safety and notification to parents and teachers

- Gaming ID and age determination to avoid collecting data on minors

- Library card replacement

What's next? We recommend PyImageSearch University.

86+ total classes • 115+ hours hours of on-demand code walkthrough videos • Last updated: April 2026

★★★★★ 4.84 (128 Ratings) • 16,000+ Students Enrolled

I strongly believe that if you had the right teacher you could master computer vision and deep learning.

Do you think learning computer vision and deep learning has to be time-consuming, overwhelming, and complicated? Or has to involve complex mathematics and equations? Or requires a degree in computer science?

That’s not the case.

All you need to master computer vision and deep learning is for someone to explain things to you in simple, intuitive terms. And that’s exactly what I do. My mission is to change education and how complex Artificial Intelligence topics are taught.

If you're serious about learning computer vision, your next stop should be PyImageSearch University, the most comprehensive computer vision, deep learning, and OpenCV course online today. Here you’ll learn how to successfully and confidently apply computer vision to your work, research, and projects. Join me in computer vision mastery.

Inside PyImageSearch University you'll find:

- ✓ 86+ courses on essential computer vision, deep learning, and OpenCV topics

- ✓ 86 Certificates of Completion

- ✓ 115+ hours hours of on-demand video

- ✓ Brand new courses released regularly, ensuring you can keep up with state-of-the-art techniques

- ✓ Pre-configured Jupyter Notebooks in Google Colab

- ✓ Run all code examples in your web browser — works on Windows, macOS, and Linux (no dev environment configuration required!)

- ✓ Access to centralized code repos for all 540+ tutorials on PyImageSearch

- ✓ Easy one-click downloads for code, datasets, pre-trained models, etc.

- ✓ Access on mobile, laptop, desktop, etc.

Summary

In this tutorial, you learned how to perform face recognition with OpenCV, Python, and deep learning.

Additionally, we made use of Davis King’s dlib library and Adam Geitgey’s face_recognition module which wraps around dlib’s deep metric learning, making facial recognition easier to accomplish.

As we found out, our face recognition implementation is both:

- Accurate

- Capable of being executed in real-time with a GPU

I hope you enjoyed today’s blog post on face recognition!

To download the source code to this post, and be notified when future tutorials are published here on PyImageSearch, just enter your email address in the form below!

Download the Source Code and FREE 17-page Resource Guide

Enter your email address below to get a .zip of the code and a FREE 17-page Resource Guide on Computer Vision, OpenCV, and Deep Learning. Inside you'll find my hand-picked tutorials, books, courses, and libraries to help you master CV and DL!

Hi Adrian,

Can we achieve the same in tensorflow framework? Is it possible to use dlib landmark and feature extractor models in tensorflow? I tried loading the model in tf session but it ofcourse failed during parsing.

Thanks!

You’re asking a few different questions here, so let me try to take apart the question and ask:

1. We are using dlib’s pre-trained deep metric facial embedding network to extract the 128-d feature vectors used to quantify each face.

2. Facial landmarks aren’t actually covered in this post. Maybe you’re referring to this one?

3. These models are not directly compatible with TensorFlow. You need dlib to use them out of the box.

So, basically, we can’t export this work to be used with the Intel Movidius stick, right?

No, not directly. You could use the same algorithms and techniques but you would need to train a Caffe or TensorFlow model that is compatible with the Movidius. That said, this method can run on the Pi (I’ll be sharing a blog post on it next week).

Thanks Adrian, will be waiting for your blog post.

How can I train a Caffee or TensorFlow model using the same techniques? Completely new to this space and stuck. I want to run face recognition model on intel movidius stick

I’ll actually be covering face recognition on the Movidius NCS in my upcoming book, stay tuned!

Hey @Adrian_Rosebrock kudos for this amazing work, really detailed and indepth explanation of every line well done. I have a question, if i want to use ip cam as a camera stream for python_video.py code, how do i do it? Much thanks

You’ll want to pass in the URL, query string parameters, etc. as the “src” to

VideoStream. Also take a look at the “cv2.VideoCapture” function as that is the underlying OpenCV method that “VideoStream” will utilize.hi i am getting this error: usage: encode_faces.py [-h] -i DATASET -e ENCODINGS [-d DETECTION_METHOD]

encode_faces.py: error: the following arguments are required: -i/–dataset, -e/–encodings. i am using python.3.5

Read up on command line arguments and you’ll be up and running in no time!

i tried the command line arguments as said in the blog, but Adrian , i am unable to correct the error , it will be kind of u to post a video on how to correct the error, i really want to learn it from an expert like u

I would kindly suggest you go back and read the argparse + command line arguments tutorial again. Command line arguments are a basic concept, one that you will often see in any computer science field. Take the time now to invest in your knowledge here. Walk before you run.

Hi Adrian,

This is indeed a great work. Learnt so much from your post.

I was wondering if you could help me out in finding the people in live webcam feed with reference data in place.

What do you mean by “reference data”?

how do we apply face recognition to realtime video stream from webcam. reference data is the database of images of people on whom we want to apply this face recognition.

That’s a really good job there Adrian.

I always love seeing new posts here and there’s always something new in deep learning I learn from you.

Thanks for your time and effort towards the community.

Thank you Sushant, I really appreciate that 🙂

Adrian, you should think about offering free courses in Coursera or edx(if you are not already doing it)

Keep up the good work

I’ve considered it but I really don’t think it’s going to happen. I prefer self-publishing my own content and having a better relationship with readers/students. I also really do not like how Udacity and the like treat their content creators. I don’t think it’s a very good relationship, and yes, while I could theoretically reach a larger audience, I don’t think it’s worth losing the more personal relationships here on the blog. Relationships and being able to communicate with others on a meaningful level is not something I would ever sacrifice.

Thank you for the wonderful post! Always wait for your post to learn new things

Thanks Ritika! 🙂

Sir,

Thanks a lot for this great tutorial. I have a question, in the encode_faces.py code we have a detection method flag which is by default cnn. I could not understand the statement ” Instead, it’s easier to use the pre-trained network and then use it to construct 128-d embeddings for each of the 218 faces in our dataset”. Where is the pretrained network or its weights?

I checked the GitHub source of face_recognition , I could only find the author telling that the network was trained on dlib using deep learning but could not find the Deep learning network used to train the network in the code repository. It seems that by calling the flag cnn I am actually getting access to the face recognition algorithm’s weight but could not understand how. In fact , I am not able to connect this blog post with the dl4cv practitioner bundle lesson three or five(I have reached only till this.).

This is way ahead of my knowledge and so sorry for asking a foolish question.

Anirban Ghosh

There are two networks if you use the “CNN” for face detection:

1. One CNN is used to detect faces in an image. You could use HOG + Linear SVM or a Haar cascade here.

2. The other CNN is the deep metric CNN. It takes an input face and computes the 128-d quantification of the face.

Thanks, Sir, your tutorials are just so great. It is my lack of knowledge that I have difficulty in understanding.

Rgds,

Anirban Ghosh

Thanks for this toturial

I want to simplify all of these steps for the user so that they can easily create different people to identify the face.for example i using raspberry pi…

For example the user can easily give different images to the system and identify the system of the individual(from another computer to raspberry pi)

Can you clarify what “simplify” means in this context? Are you talking about building a specific user interface or set of tools to facilitate face recognition on the Pi? Are you talking about reducing the computational complexity of this method such that it can run on the Pi?

Please answer me,please

Im your advocate

Nice tutorial! I Implemented basically the same pipeline with hypersphere embedding (https://arxiv.org/abs/1704.08063), but despite the promises, it works poorly on real data. The lighting conditions and the shadows seem to split my clusters even for intuitively easy cases. What are your experiences with dlib?

I’ve had wonderful experiences with dlib. The library is easy to use and well documented. Davis King, the creator, has done an incredible job with the library.

Dlib uses the facenet architecture, inspired by the openface implementation, as far I know. There is an embedding vs embedding competition in my eyes, I don’t care about the library.

Compared to OpenFace I’ve found dlib to be substantially easier to use and just as accurate. Between the two I would opt for dlib but that’s just my opinion.

Sir,

I kept on looking finally found that the dlib library is actually trained on a DL network. It is written in C++ and since I am also studying C++ on the side, this is something I understand(at least superfluously), now it is clear as to why we can just pass in the image for detecting the face in the image in encoding_image.py, as because the dlib library is trained on 3 million plus images and here we just used its weights for finding the faces in the images and appended the name to the appropriate 128-D vector embedding that was created. Need to learn more before I can have a complete grasp of this subject , any way great explanation, I had seen this GitHub repo of Adam Gitgey before but could not understand than how to use it. Today , I at least understand it a bit and it looks the concept is pretty similar to word embeddings used in NLP. Thanks anyways. for the nice tutorials.

Regards,

Anirban Ghosh.

Your understand is correct, Anirban. We must first detect the face in the image (using a deep learning based detector, HOG + Linear SVM detector, Haar cascade detector, etc.) and then pass the detected face region into the deep learning embedding network provided by dlib. This network then produces the 128-d embedding of the face.

Hi Adrian,

Thanks for explaining the Face recognition Technology with different combinations of algo.

Would it be possible for you to provide some more details on “128-d embedding of the face”.

I understand that the network calculate this , but I am curious to know taking what features of face is this calculated.

Does it is calculated like distance between nose to eyes , nose to lips , eyes to eyes etc.?

I want to understand what points / features exactly taken and how it becomes 128 vector only…..

Take a look at the original articles by Davis King and Adam Geitgey that I linked to in the “Understanding deep learning face recognition embeddings” section. Adam’s article in particular goes more into the details on the embedding.

Hi Adrian,

Thanks for guiding.

But the above article also didn’t go into details , what features are these embeddings signifies.

Is there any other tutorial or link you can guide me to

OR if you can give information on the features these embedding signifies.

I would suggest you read the FaceNet paper as well as the DeepFace paper.

Yes i mean is user interface application for interacting raspberry pi server..

I

There are a few ways to accomplish this, but if you’re talking strictly about building a user interface that doesn’t really have a whole lot to do with computer vision or deep learning (I’m also certainly not a GUI expert). If you’re interested in building user interfaces take a look at libraries such as Tkinter, Qt, and Kivy. From there you’ll want to take a look at different mechanisms to pass data back and forth between the client and server. A message massing library like ZeroMQ or RabbitMQ would be a good option.

Please use my pyfacy python package for Face Recognition with user friendly.

Refer link:

https://medium.com/@manivannan_data/pyfacy-face-recognition-and-face-clustering-8d467cba36de

Great tutorial Adrian.

I am anxiously waiting for your post every Monday.

Although, it would be very nice of you if you could show us how to train a Face recognition system from the scratch using a standard detection model (Yolo, MobileNet, SqueezeNet etc.) specifically build for low power single board computers i.e., Raspberry Pi using Keras and Tensorflow or Digits+Caffe etc.

I really appreciate your effort and time that you put into organizing these tutorials.

Thanks again!

I’ll be doing a face recognition + Raspberry Pi tutorial next week 😉

Nice post Adrian. I’m getting some error $ python encode_faces.py –dataset dataset –encodings encodings.pickle

Illegal instruction (core dumped)

How can I fix this?

It sounds like one of the libraries is causing a segfault of some sort. Try inserting “print” statements or using “pdb” to find the line that is causing the issue.

it is the problem with dlib library, I tried building with AVX_INSTRUCTIONS= 0, I was able to run a program but the process is to slow and laggy, my computer got frozen, I think it’s not running with GPU.

Your computer likely isn’t frozen, it’s just taking awhile to detect the faces. Try using the HOG + Linear SVM face detector rather than the CNN detector.

What changes should I do if I use HOG SVM method? I never used those before

Please refer to the post as I discuss the difference between the two methods. You change your

--detection-methodfromcnntohog. Again, refer to the post.Hey Adrian,

Thanks for the detailed explanations. I ran the above code on my laptop and it appears very slow, the webcam stream is almost frozen. The compare method will compare each detected face with all the encodings, that will a lot of time for each frame i think. Please let me know your thoughts for the same.

Thanks

It’s actually not the embedding and comparison that is taking most of the time, it’s detecting the face itself via CNN face detector. Try using HOG + Linear SVM by switching the

--detection-methodcommand line argument tohog. Additionally, you could use Haar cascades as well.What we need to do to use harcascade in instead of svm

Then you would need to manually update the code to use OpenCV’s pre-trained Haar cascade.

I can’t wait to get home to “play” with this information from your post. Your blog, and the Practical Python and OpenCV system i purchased are really helping me become educated in this field!

Thanks Bruce, I really appreciate that! Enjoy hacking with the code and always feel free to reach out if you have any questions.

The encode_face.py took its time, The issue is coming up when i run the recognise_face_video.py. The Frames are frozen. I am using a i5 with 8gb ram. What should be hardware specifications for a decent real time face recognition sytem?

Hey there Devarshi, make sure you read my reply to your original question. I have already answered it for you. The frame is not “frozen”, it’s that the CNN face detector is taking a long time to run on your CPU. Change the

--detection-methodtohogand it will run on your CPU.If you would like to use the more accurate CNN face detector rather than HOG + Linear SVM or Haar cascades you should have a GPU.

Got It! I misunderstood the previous comment. Thank you for correction. You are the best!

Thank you for this great tutarial.

Which one of face recognition architectures you used in this tutarial??facenet or deepface or other one??

I used the model discussed in the dlib library.

Thanks Adrian for all you time and effort . Very cool face recognition example !!!!

Thanks Steve!

Hello dear adrian…

excellent??

You are wonderful

Grade 1?

Thank you for the kind words 🙂

I am getting MemoryError: bad allocation when I am using detection-method=’cnn’.My laptop configuration is i7 processor with 8gb ram and 4GB graphics card

It is working fine with hog. Can you please suggest how can i use CNN face detector with such configuration

It looks like your machine is running out of memory. Can you confirm whether the error is for your RAM or for your GPU memory?

Error is for my RAM

That’s odd that you would be running out of physical RAM. 8GB should be sufficient. Are you using the exact same code + dataset I am using in the blog post?

I have the same error however I was able to solve it, for images only, by using the full path to the image and reducing the image size to a 448×320 75kb jpg.

I noticed that cnn takes a long time and appears to only be using one thread.I also do not notice any activitiy on the GPU (Win10 Task Manager GPU).

I tried face_detection_cli.py (face Reconition Site Packages) to test Multithreaded CPU with CNN on the original sized example_01.png and it worked with no memory error and appeared to be using multiple CPU threads.

I am unsure if the Memory Error: Bad Allocation is simple as my GPU only has 4gb dedicated memory and/or whether the integrated Intel HD Graphics 4600 is causing a problem with the Nvidia GTX 860M in my laptop.

Win 10 x64, 16GB, Intel HD 4600 + GTX 860M

I received this error also – I just needed to put the full path name in for the input image file and it worked fine.

I am still getting the MemoryError: bad allocation when running recognize_faces_video_file.py however and using full path name is not fixing that…

i7, 16gb, Win 10 x64, Geforce 860M 4gb

Dlib Use Cuda and Use AVX selected

Your GPU itself is likely running out of memory as it cannot hold both (1) the face detector and (2) the encoding model. I would suggest using the CPU + HOG method for face detection.

So I bit the bullet and managed to successfully follow your wonderful guide ‘Setting up Ubuntu 16.04 + CUDA + GPU for deep learning with Python’

On the very same laptop I now can run all using ‘cnn’ and Nvidia-smi (average) when running recognize_faces_video_file.py (with –display 1) shows;

GPU Util = 87%

GPU Mem usage Python = 697MiB

GPU Mem usage ‘other’ total = 400MiB

Therefore in conclusion for me Windows kinda worked but Ubuntu the way forward!

None of this would have been possible for me without your tutorials Adrian – thank you

NB Took me about 5 attempts to get Ubuntu up and running (and I can still dual boot to Windows 10 if needed)

Nice job Matt! 🙂

Great tutorial, clearly explained and easy to follow.

Thanks so much Simon! 😀

Does this algorithm work better than inception v3 model for image classification?

They are two totally different techniques used for two totally different applications. The Inception network is used for (typically) image classification. The network here is used to compute a 128-d quantification of a face. You can’t compare them or say which one is better, it would be like comparing apples and oranges.

I got an error on 2nd image

…

rgb = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)

(-215) scn == 3 || scn == 4 in function cvtColor

Double-check your command line arguments. I believe the path to your input

--imagepath is incorrect. If it the path does not exist, thecv2.imreadfunction will return “None”.Do you solve this error?

Could you tell me how to solve it?

Hi Adrian

i test this with dlib without gpu and i was getting low fps,so i installed dlib with gpu and again low fps so how to use hog or other for better fps.Sorry for asking i am beginer.

Hey Kubet — what type of GPU are you using? Can you confirm that dlib is actually accessing your GPU?

Hi Adrian,

Thank you for the great posts as always.

I tried with HOG for face detection and it is still significantly slow compared to the other two face recognition programs you posted. However, I believe this is the most accurate one among the three approaches (Please correct me if I am wrong).

May I know how I can check whether dlib is accessing GPU or not?

Also, I am wondering if it is possible to use Movidius Neural Compute stick to speed up this program or the other 2 approaches?

The deep learning-based face detector will be the slowest but most accurate. Haar cascades will be faster but less accurate. HOG is a middle ground between the two. As far as I know dlib does not use the GPU for HOG but does for the deep learning-based detector.

I am getting this error when I use cnn –

MemoryError: std::bad_alloc

Any idea why is it so ? Because everything is working fine in hog

Your machine is running out of memory and it cannot load the CNN. You would need to use the HOG method.

Hi Awesome post.

Is there any way to get this to work on windows/anaconda env?

Many thanks

Sure. You would need to install OpenCV and dlib into your Anaconda environment. OpenCV should be available from the standard Anaconda repo. Both dlib and imutils are pip-installable as well.

I’ve managed to install OpenCV 3, dlib, and imutils, but I am having issues with face_recognition which doesn’t seem to be supported either via pip install or conda install

The face_recognition module is certainly supported via a pip install. Double-check your output and ensure there are no errors. If you are running into issues installing it on your Windows system I would suggest posting on the face_recognition GitHub page.

Thank you , I will try the installation steps, otherwise I am eagerly awaiting the rpi blog!

it worked for me finally! nice program man! thank you!

Congrats on getting the face recognition code up and running!

Why when running last code, on the video file, my MAC is moving soo slow. Is like time stad still. Is this due to my MAC’s memory? Thank you Adrian!

Please read the other comments. I’ve mentioned that if you are using a CPU you should be using the HOG + Linear SVM detection method instead of the CNN face detector to speedup the face detection process.

Adrian, I am wondering if you have experience with cloning the virtual environment? I just built this ENV per your instructions, and am wondering if I can clone it before I use, then I have a “boiler plate” version to copy from for similar projects. Having a baseline image of OpenCV, dlib and face_recognition to spin off new projects from would be great. I looked at the pip freeze and requirements.txt but when I ran that, it does not show the OpenCV or the dlib? If I am off track on this just let me know. I am thinking ahead to other projects with out having completing this one I know.

I don’t really like trying to “clone” a virtual environment. It’s too likely to introduce some sort of errors. Instead, I recommend using a pip freeze and requirements.txt as you noted. However, keep in mind that libraries that are hand compiled and hand installed WILL NOT appear in your pip freeze. You would need to either (1) recompile or reinstall or (2) my preferred method, sym-link the libraries into the site-packages directory of the new virtual environment.

Hello, the articles you publish are very useful even for beginners like me. I would like to know if you can use the facial recognition implemented in the code “recognize_faces_video.py” inside a main, that is: I need my raspberry to recognize ONLY my face and in case of recognition perform other operations.

Thanks in advance

Next week I’ll be discussing how to run this script on your Raspberry Pi. Be sure to keep an eye out for the post!

Guess you made my day!!!! Spent a day and a half compiling dlib without result, when i saw your post. Think it works now.

Thanks

Congrats on getting dlib installed, Bart!

Jesus this is a tutorial with a lot of depth. Thank you for that! I can’t find a use for it at my current job, but in my private life, I’ll try using this!

Thanks Oliver, I’m glad you liked it! 🙂

On the raspberry pi 3, it’s will be work in real time without freeze?

There are a bunch of considerations you’ll want to handle with the Raspberry Pi — I’ll be covering them in next week’s blog post.

I have used HOG detection method to speed up the face detection method and its working fine. but if i want to use ” cnn ” face detector so what configurations are needed for smoothly and fast face recognition process ?

You would want to (1) have a GPU and (2) install and configure dlib with GPU support (this post demonstrates how, refer to the install instructions). I personally recommend NVIDIA/CUDA compatible GPUs.

Hey, you got a typo in a comment.

# load the input image and convert it from RGB (OpenCV ordering)

# to dlib ordering (RGB)

image = cv2.imread(imagePath)

It should be “convert it from BGR…”

Good read, thanks.

Fixed, thanks for catching this!

Thank for great tutorial, my bro!

I installed dlib use GPU. But how to know whether dlib uses GPU or not when running encoding_face.py?

Thank so much

If you are using an NVIDIA GPU you can run

nvidia-smito check GPU utilization. If you are using another GPU you should refer to the docs for it.I’m using Nvidia Geforce GT 705 2GB. When running encoding_face.py with 51 input images + runnig nvidia-smi, i think my dlib did not use GPU. I didn’t see anything with nvidia-smi.

My syntax:

– nvidia-smi.exe

(correct if i wrong)

Are you using Windows? Sorry, I haven’t used Windows in 10+ years. I’m not sure how you would check GPU utilization on Windows. Also keep in mind that the face_recognition module only “unofficially” supports Windows so that might be part of the issue as well.

I ran pip install face_recognition successfully on Windows.

Is it OK If I build OpenCV not use GPU but build dlib use GPU?

That should not be a problem.

Hey Adrian!

Its really wonderful and helped me a lot:)

I am presently running with one issue.

When I have inserted the new dataset images with the size between 10-30KB it was working very fine.

However when I have given the images of 1.3MB “Encode Face” python code was not encoding all the images and in between it slows down.

I am not using any GPU system presently.

Do I need to use HOG + Linear SVM for this as well or is there any other issue ?

Cheers ,

Bhargav

Hey Bhargav — what do you mean by “not encoding all the images”? Are you referring to the

encode_faces.pyscript? Is it taking awhile to encode the faces in the training set? Does it exit with an error?Hi !

It does not exit with an error , however it does not go ahead when I am running encode_faces.py script.

I am not having GPU in my system , is that the reason for this ? If not then can you suggest what can be the possible reasons for this?

Note: While I am running the same code with slower images [3 to 10 KB] , that time it works fine. However the desired accuracy is not being achieved with these images and thus I want to feed high quality images in my dataset.

Be sure to check the activity monitor on your system, in particular the CPU usage. I have a feeling that the script is indeed running, it’s just taking a longer time to process more times. These systems are not magic. If you provide more data to the system it will take longer to process.

Given the hassles around trying to get some of this setup on Windows, is there an OpenCV/Python Docker image that you would recommend for trying out these tutorials?

Inside Practical Python and OpenCV I offer a VM that comes pre-configured with OpenCV/Python. I would suggest starting there (plus you can learn the fundamentals of OpenCV/Python as well).

Hi adrain,

I am having problems with installing dlib and face_recognition module on windows!

Can you help?

thanks

Hey Kartik, I don’t support Windows here on the PyImageSearch blog. I would highly recommend you use a Unix-based system such as Linux (ideally Ubuntu) or macOS. If you take a look at the face_recognition GitHub you’ll find install instructions but the library does not officially support Windows. A link is provided if you want to give it a try, but again, you should consider using Linux here.

Like always thanks for your great tutorial.

I am an avid reader of your blog. I have images of 6000 individuals who appear in the cctv images one time or the other. I want to experiment as explained in this post.

My questions is should i have each individuals name in the file as in indicated in the “dataset” section of “Face recognition project structure” where you listed the file limit to be 10 as indicated in the blog? kindly advise.

I think you might be confusing the

--filelimitswitch to thetreecommand. I usedtreeto show the project directory structure. I am not limiting each person to only 10 example images. I’m allowing all images for each individual to be used. You should do the same.Hey Adrian,

I’ve set up dlib with CUDA support but it seems my GPU might not be up to the task. I have a GeForce GTX 950M 2gb. After about encoding the fourth image I get a runtime error from CUDA: “Error while calling cudaMalloc(&data, n), reason: out of memory”. This makes sense since by the third image I’ve used over 50% of my graphics cards memory. I don’t see how this could possibly scale up to 218 images, even for high-end graphics cards. I have to be doing something wrong here. Any input would be greatly appreciated.

A 2GB card is likely not enough for this task, especially if you are trying to use the CNN face detector.

I also think you have a misunderstanding on how the face embeddings are extracted. All 218 images are not passed in at once, they are passed in as batches. In this case the batch size is trivially one, implying only one image at a time is fed through the network and only one image at a time would be pass through the network on the GPU.

Hey Adrain ,

I really would be glad to know from your side that, for the smooth running of these entire code structure what will be the minimum system requirement in terms of GPU , CPU and Processor .

Look forward to hear from you.

On my system I was running a 3 Ghz processor and 16GB of RAM for the HOG detector. When using the GPU, I had a 3.4Ghz processor, 64GB of RAM (you wouldn’t need that much), and a Titan X GPU. A GPU with at least 6GB of memory is preferable for deep learning tasks.

Cool stuff. I would like to see this with people who are not white. Would you still receive the same results? Just a thought…

The network was trained on millions of images, both white and non-white, and obtains over 99% accuracy on the LFW (mentioned in the post) which includes many non-white examples as well. That said, there is an unconscious bias in some datasets that can lead to models performing not as well on non-whites. Great question!

cnn does not work (gives me a MemoryError: bad allocation) and I try to use Hog but not work, when I run the python file recognize_faces_video.py nothing happens I have 8 GB ram with a usable 3.49 GB ram in a windows 10 32 bits

Could you be a bit more specific regarding “nothing happens”? Does a window open up on your screen but the frame does not process? Does the script automatically exit? Do you receive an error?

Adrian, Thanks a lot for your great blog post.

How many pics per actor do you need to make your streaming movie detection work?

I saw that you had like 22 per actor. Is one per actor even enough?

You can get away with one image per person for highly simplistic projects but you should aim to be much higher, ideally in the 20-100 range.

Whats the implication of using less images? Less chance of an accurate match?

Thanks a lot

You illustrated a detailed topic in a the most clear way

With your talent, I would understand the Relativity Theory if you post an article about it 🙂

Thanks Walid 🙂

Good post. Love seeing people using deep learning+machine learning techniques in clever ways.

Thanks so much Jeremiah!

Hi Adrian,

I am trying to use your code for facerecognition.

When using an image of my baby, it seems that your code doesnt run the conversion into BGR in order to make the boxes on the face and to save them into the pickle.

If i use your own dataset, with actors from that movie, the code will run. My question to you is, did you edit the photos before runnign the code.

If yes, what you did in order to run yoru face recognition code?

Thank you,

1. Regarding the photos of your baby (congrats!) are you saying that no faces are detected? It’s sounds like that is likely the problem. Did you try the HOG and CNN methods as well?

2. No image editing was performed at all on the code. They were downloaded to their respective directories and then I went in and manually removed irrelevant ones.

Have changed the model from cnn to hog, is working, but there are some error–>> Invalid SOS parameters for sequential JPEG. Should i edit the pics before running the code?

Those are not OpenCV/dlib errors. They are actually warnings from the libraries used to load the images. It should not be an issue.

After practicing this tutorial, i have a question. Why does each person have multiple 128-d measurements? Why do we summarize into 1?

If distance between each above 128-d measurements > 0,6 or similar, is the result wrong when inspecting the new input image?

Each face in an image ins quantified with as 128-d feature vector. These feature vectors cannot be combined into one, as again, each face input to the system is represented by a 128-d vector. As for your second question, if the distance between an input face and a set of faces in a dataset is too large, the face is marked as “Unknown”.

If distance between 2 encoding input >0,6?

I’m not sure what you are asking. Could you clarify?

Hi Adrian

Does the model need more training data like 30 or 40 images for each person?

I tested the model with addition of my images(total 10) to the existing dataset and tested the model. Model was not accurate and was not able to recognize my images correctly.

Regards

Gaurav

I typically recommend 20-100 images per person. The more you can get, the better. Ideally these images should be representative of where the system will be deployed (i.e., lighting conditions, viewing angle, etc.)

Hi adrian

i would like to add new images or delete images in database and when i do it then prior images that exist in the database are stored in encodings.pickle and only for new images encode_faces.py be done.

I want to reduce the time to save the encoding in the encoding.pickle.

Otherwise, a lot of time should be spent even adding a new image.

I would suggest you:

1. Use encode_faces.py as you normally would but each time you run it, create a new pickle file.

2. When you’re ready to recognize faces, loop over all pickle files, load them, and create a “data” variable that stores all information from all pickle files.

Hi Adrian

May I know how to combine multiple pickles into 1 variable?

Thank You

If you’ve never merged two lists I would recommend you read the following tutorial on StackOverflow.

From there:

1. Load both picked’d dictionaries

2. Append the lists together

3. Create a new dictionary on Lines 56-59 and write the appended lists to file

Can you please put an example appending the actual encoding.pickle files?

I will consider it but I cannot guarantee I will cover it. I’ll likely end up covering it in my Computer Vision + Raspberry Pi book though!

Can I save encodings in a database, for example mysql and update each time with new images?

You can save the encodings in whatever database you like, whether that’s a CSV file, JSON file, a mySQL database, a key-value database, etc. But if you add new images to the database you’ll need to “retrain” the model.

Why we need “retrain”? I think the model already trained. The model like feature extractor, isn’t it? We are only calculating feature vectors of each image. If we add new images to database, I think the 128-d feature vector should be calculate and append pickle file. Am I wrong? Thanks!

You are correct but keep in mind that Dauy’s original question was in context of using a database server instead of a pickle file. They would have to load those values back into the k-NN search.

Hi Adrian

Thank you so much your post

I have a problem I installed dlib easily but While I was installing face_recognition I have cmake error:

CMake must be installed to build the following extensions: dlib