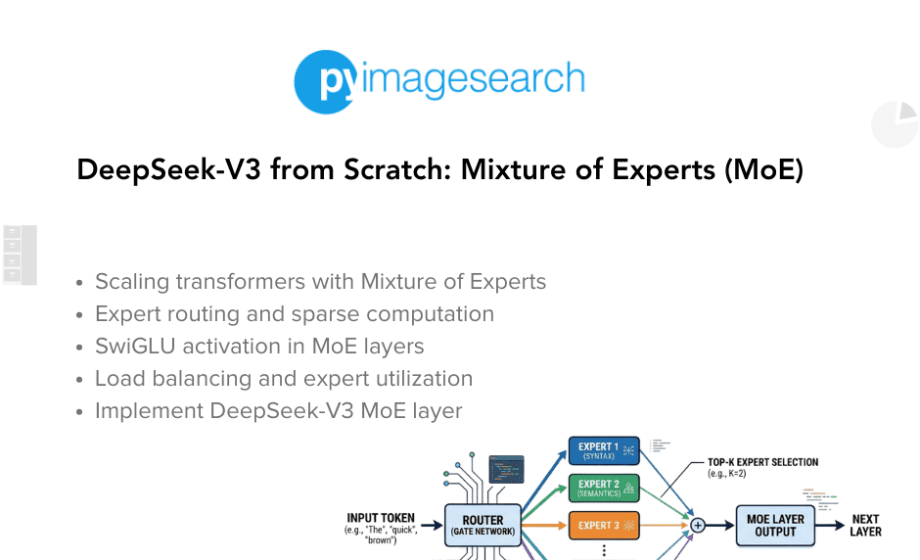

Table of Contents

- DeepSeek-V3 from Scratch: Mixture of Experts (MoE)
- The Scaling Challenge in Neural Networks
- Mixture of Experts (MoE): Mathematical Foundation and Routing Mechanism
- SwiGLU Activation in DeepSeek-V3: Improving MoE Non-Linearity
- Shared Expert in DeepSeek-V3: Universal Processing in MoE…

Deep Learning

DeepSeek

Machine Learning

Neural Networks

Tutorial

DeepSeek-V3 from Scratch: Mixture of Experts (MoE)

March 23, 2026

PyImageConf