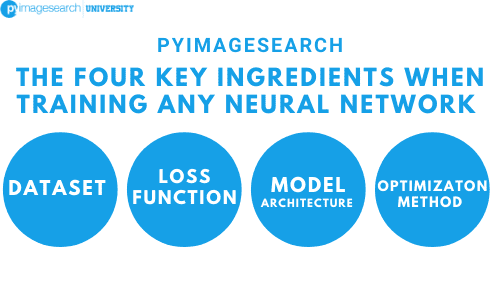

You might have started to notice a pattern in our Python code examples when training neural networks. There are four main ingredients you need to put together in your own neural network and deep learning algorithm: a dataset, a model/architecture, a loss function, and an optimization method. We’ll review each of these ingredients below.

Dataset

The dataset is the first ingredient in training a neural network — the data itself along with the problem we are trying to solve define our end goals. For example, are we using neural networks to perform a regression analysis to predict the value of homes in a specific suburb in 20 years? Is our goal to perform unsupervised learning, such as dimensionality reduction? Or are we trying to perform classification?

In the context of this book, we’re strictly focusing on image classification; however, the combination of your dataset and the problem you are trying to solve influences your choice of loss function, network architecture, and optimization method used to train the model. Usually, we have little choice in our dataset (unless you’re working on a hobby project): we are given a dataset with some expectation of what the results from our project should be. It is then up to us to train a machine learning model on the dataset to perform well on the given task.

Loss Function

Given our dataset and target goal, we need to define a loss function that aligns with the problem we are trying to solve. In nearly all image classification problems using deep learning, we’ll be using cross-entropy loss. For problems with more than two classes, we call this categorical cross-entropy. For two-class problems, we call the loss binary cross-entropy.
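To make the two variants concrete, here is a minimal sketch of both losses for a single sample, written in plain Python. The function names and the `eps` clipping value are our own assumptions for illustration; in practice you would use the loss implementations that ship with your deep learning library.

```python
import math


def categorical_cross_entropy(probs, label, eps=1e-12):
    """Loss for one sample with more than two classes.

    probs: predicted class probabilities (assumed to sum to 1).
    label: integer index of the true class.
    """
    # Only the probability assigned to the true class contributes.
    return -math.log(max(probs[label], eps))


def binary_cross_entropy(p, y, eps=1e-12):
    """Loss for one sample of a two-class problem.

    p: predicted probability of the positive class.
    y: true label, either 0 or 1.
    """
    # Clip p away from 0 and 1 so that log() is always defined.
    p = min(max(p, eps), 1.0 - eps)
    return -(y * math.log(p) + (1 - y) * math.log(1.0 - p))


# A confident, correct prediction is penalized less than an unsure one.
confident = categorical_cross_entropy([0.05, 0.90, 0.05], label=1)
unsure = categorical_cross_entropy([0.40, 0.30, 0.30], label=1)
coin_flip = binary_cross_entropy(0.5, y=1)
```

Note that the loss shrinks toward zero as the probability assigned to the correct class approaches one, which is exactly the behavior we want the optimizer to reward.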

Model/Architecture

Your network architecture can be considered the first actual “choice” you have to make as an ingredient. Your dataset is likely chosen for you (or at least you’ve decided that you want to work with a given dataset). And if you’re performing classification, you’ll in all likelihood be using cross-entropy as your loss function.

However, your network architecture can vary dramatically, especially when combined with your choice of optimization method for training the network. After taking the time to explore your dataset and look at:

- How many data points you have.

- The number of classes.

- How similar/dissimilar the classes are.

- The intra-class variance.

You should start to develop a “feel” for a network architecture you are going to use. This takes practice as deep learning is part science, part art.
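The exploration checklist above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `summarize` helper, the toy two-dimensional feature vectors, and the use of centroid distance as a rough proxy for class similarity are all hypothetical choices of ours, not a prescribed method. With real images you would typically compute such statistics on extracted features rather than raw pixels.

```python
import math
from collections import defaultdict


def summarize(samples):
    """Summarize a dataset given as a list of (feature_vector, label) pairs."""
    by_class = defaultdict(list)
    for features, label in samples:
        by_class[label].append(features)

    summary = {
        "num_points": len(samples),
        "num_classes": len(by_class),
        "per_class": {},
    }
    for label, vectors in by_class.items():
        dim = len(vectors[0])
        centroid = [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]
        # Intra-class variance: mean squared distance of samples to their centroid.
        variance = sum(
            sum((v[i] - centroid[i]) ** 2 for i in range(dim)) for v in vectors
        ) / len(vectors)
        summary["per_class"][label] = {
            "count": len(vectors),
            "centroid": centroid,
            "intra_class_variance": variance,
        }
    return summary


# Toy data, made up purely for illustration.
toy = [
    ([0.0, 0.0], "cat"),
    ([2.0, 0.0], "cat"),
    ([10.0, 10.0], "dog"),
    ([10.0, 12.0], "dog"),
]
stats = summarize(toy)

# Rough proxy for how dissimilar two classes are: distance between centroids.
separation = math.dist(
    stats["per_class"]["cat"]["centroid"],
    stats["per_class"]["dog"]["centroid"],
)
```

A large centroid separation relative to the intra-class variance suggests classes that are easy to tell apart, while the opposite hints that a higher-capacity architecture (or more data) may be needed.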

Keep in mind that the number of layers and nodes in your network architecture (along with any type of regularization) is likely to change as you perform more and more experiments. The more results you gather, the better equipped you are to make informed decisions on which techniques to try next.

Optimization Method

The final ingredient is to define an optimization method. Stochastic Gradient Descent (SGD) is used quite often. Other optimization methods exist, including RMSprop (Hinton, Neural Networks for Machine Learning), Adagrad (Duchi, Hazan, and Singer, 2011), Adadelta (Zeiler, 2012), and Adam (Kingma and Ba, 2014); however, these are more advanced optimization methods.

Despite all these newer optimization methods, SGD is still the workhorse of deep learning: most neural networks are trained via SGD, including the networks obtaining state-of-the-art accuracy on challenging image datasets such as ImageNet.

When training deep learning networks, especially when you’re first getting started and learning the ropes, SGD should be your optimizer of choice. You then need to set a proper learning rate and regularization strength, the total number of epochs the network should be trained for, and whether or not momentum (and if so, which value) or Nesterov acceleration should be used. Take the time to experiment with SGD as much as you possibly can and become comfortable with tuning the parameters.
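To see how these knobs fit together, here is a minimal sketch of per-sample SGD fitting a single weight on a toy regression problem. Everything in it is an assumption made for illustration: the `train_sgd` function, its default values, the L2 penalty used as the regularizer, and the toy data are hypothetical, and a real project would rely on the optimizer provided by its deep learning library.

```python
import random


def train_sgd(data, lr=0.01, epochs=100, momentum=0.0,
              nesterov=False, l2=0.0, seed=0):
    """Fit y ~ w * x with per-sample SGD and return the learned weight."""
    rng = random.Random(seed)
    data = list(data)  # copy so shuffling does not modify the caller's list
    w, velocity = 0.0, 0.0
    for _ in range(epochs):
        rng.shuffle(data)  # visit the samples in a new random order each epoch
        for x, y in data:
            # Nesterov acceleration evaluates the gradient at the look-ahead point.
            w_eval = w + momentum * velocity if nesterov else w
            # Gradient of 0.5 * (w * x - y)**2 + 0.5 * l2 * w**2 with respect to w.
            grad = (w_eval * x - y) * x + l2 * w_eval
            velocity = momentum * velocity - lr * grad
            w += velocity
    return w


# Toy data generated from y = 3 * x, so the ideal weight is 3.
samples = [(x, 3.0 * x) for x in (1.0, 2.0, 3.0, 4.0)]

w_plain = train_sgd(samples)
w_momentum = train_sgd(samples, momentum=0.9)
w_nesterov = train_sgd(samples, momentum=0.9, nesterov=True)
w_regularized = train_sgd(samples, l2=1.0)  # the penalty pulls w toward zero
```

Try changing `lr`, `momentum`, and `epochs` one at a time and watch how the learned weight responds. Building that intuition on a tiny problem carries over to tuning real networks.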

Becoming familiar with a given optimization algorithm is similar to mastering how to drive a car — you drive your own car better than other people’s cars because you’ve spent so much time driving it; you understand your car and its intricacies. Oftentimes, a given optimizer is chosen to train a network on a dataset not because the optimizer itself is better, but because the driver (i.e., deep learning practitioner) is more familiar with the optimizer and understands the “art” behind tuning its respective parameters.

Keep in mind that obtaining a reasonably performing neural network on even a small or medium dataset can take tens to hundreds of experiments, even for advanced deep learning users, so don’t be discouraged when your network isn’t performing extremely well right out of the gate. Becoming proficient in deep learning will require an investment of your time and many experiments, but it will be worth it once you master how these ingredients come together.
